Baptisms of fire

How humans + agents execute a regulatory workflow (not the LinkedIn version)

Courtesy of Pixabay

Intro

Today I’m launching OpenCRO: Real-world MedTech Cybersecurity.

OpenCRO is an expert-led, agent-operated company.

It’s been a journey. 20 years. 42 MedTech companies. 14 FDA clearances. 1 De Novo world speed record.

After conversations with many of you over the past 3 months about why MedTech companies die, I decided to do something about it.

Fixed outcome. Fixed price.

Not hours.

In a post-management consulting world, that’s the new delivery standard.

Why FDA Cyber first? And not an AI-agent story?

Because the outcome is far more important than the buzz.

AI-agents are hot today. FDA Cyber is the gate to your commercialization tomorrow — and the robustness of your security countermeasures the day after.

The MedTech executives I talk to are hungry for the depth, discipline and infrastructure required to achieve and sustain robust cybersecurity and cyber resilience.

Ransomware and supply-chain attacks shape how hospitals evaluate device vendors. FDA guidance is more explicit, documentation expectations are higher, and review questions are deeper and more technical.

I built OpenCRO on my expertise in security and privacy for medical devices, software as a medical device, digital therapeutics, AI-based diagnostics and consumer digital health applications. I was inspired by Practical Threat Analysis, a Microsoft desktop tool that we used at Open Solutions, my security and privacy consultancy back in the day.

OpenCRO integrates a human workflow and an agentic-AI engine I call the Pattern Factory. Some Pattern Factory agents write reports and extract design patterns and anti-design patterns from content, reasoning over causal flows. Other agents generate threat models from user stories.

In this essay, I debrief the first mission that OpenCRO executed, in January 2026.

Mission Objective

Deliver FDA Cyber threat analysis compliant with 2026 guidance, on time, without regulatory deficiency.

Outcome

Delivered on time. No known structural deficiencies. 11 iterations required.

Cost

2 hours in workshop with customer writing cards

4 hours of model iterations

2 hours in RA/QA manager review

3 hours in FDA reviewer simulation

9 hours of rework

What happened (Facts/data)

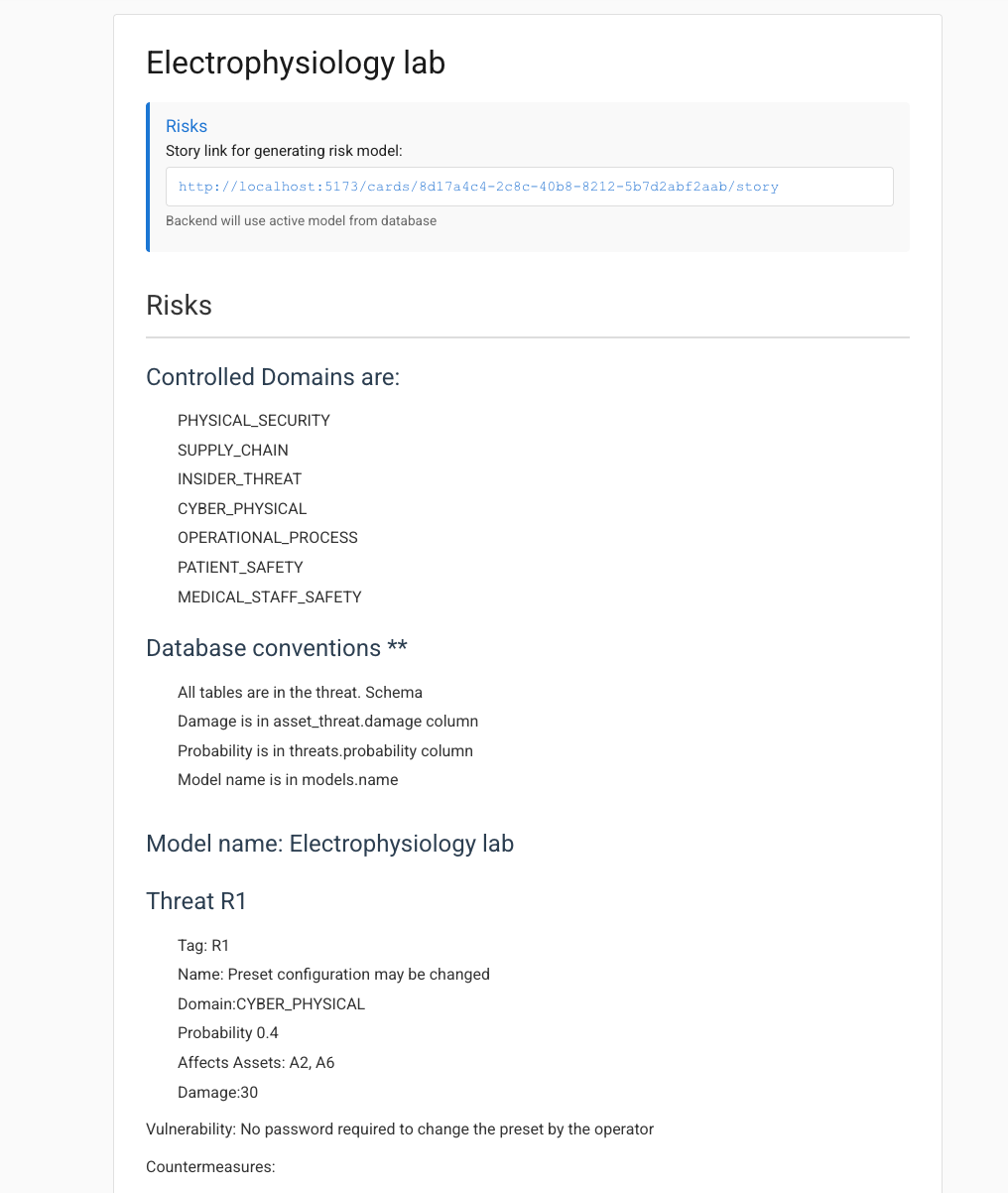

A customer contracted with OpenCRO to perform an FDA Cyber threat analysis for their electrophysiology lab system.

We ran an in-person data collection workshop with the team, where they wrote down risk scenarios on paper cards.

The paper cards were scanned and converted into a user story in Markdown format.

The Pattern Factory agents generated a threat model from the story.

The process worked reliably and took about 30s to populate the threat master tables and relationships in an iteration.

I wrote a spec, and the Pattern Factory agents generated the reports (manually imported into Google Sheets and linked into the Google Doc of the report).

I discovered a number of weaknesses in my process and in the code. The report went through 11 iterations with 3 iterations of review using 2 language models (chat and Claude) in simulated roles of FDA reviewers. The final report was released on time and is labeled V4.

We write stories like this in Markdown because it makes it easy for the agents to generate the threat model entities in the PostgreSQL database.
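To make the story-to-entities step concrete, here is a minimal sketch of how a Markdown card story could be parsed into threat records before anything is written to the database. The card layout (`## Threat:` headings with `DOMAIN:`, `ASSET:` and `VULNERABILITY:` lines), the `ThreatCard` class and the sample cards are all assumptions made up for illustration; the essay does not show the real story format, and the Pattern Factory agents may work quite differently.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical card-story layout and invented sample cards (not the real format).
STORY = """\
## Threat: Tampered software update package
DOMAIN: SAFETY
ASSET: Device firmware
VULNERABILITY: Update signature not verified

## Threat: Patient record exported to USB
DOMAIN: PRIVACY
ASSET: Patient records
VULNERABILITY: Unencrypted export
VULNERABILITY: USB ports left enabled
"""

@dataclass
class ThreatCard:
    name: str
    domain: str = ""
    assets: List[str] = field(default_factory=list)
    vulnerabilities: List[str] = field(default_factory=list)

def parse_story(markdown: str) -> List[ThreatCard]:
    """Turn a card story into ThreatCard records, one per '## Threat:' heading."""
    cards: List[ThreatCard] = []
    for raw in markdown.splitlines():
        line = raw.strip()
        heading = re.match(r"^##\s*Threat:\s*(.+)$", line)
        if heading:
            cards.append(ThreatCard(name=heading.group(1).strip()))
            continue
        tag = re.match(r"^(DOMAIN|ASSET|VULNERABILITY):\s*(.+)$", line)
        if tag and cards:
            key, value = tag.group(1), tag.group(2).strip()
            if key == "DOMAIN":
                cards[-1].domain = value
            elif key == "ASSET":
                cards[-1].assets.append(value)
            else:
                cards[-1].vulnerabilities.append(value)
    return cards

cards = parse_story(STORY)
for card in cards:
    print(card.name, "|", card.domain, "|", len(card.vulnerabilities), "vulnerabilities")
```

Keeping the parsed cards as plain records means the same story can feed both a review step with the customer and the database load, without hand-editing tables in between.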

Why did it happen? (Gap between planning and execution)

Not properly understanding threats to patient and medical user safety, for example the ‘Preset configuration may be changed’ threat scenario. The threat was incorrectly classified as attacking patient safety, when in fact it’s an application function used to configure the device for procedures.

Not having integral attacker type support in the agent flow. I postponed implementing attacker types (defined in the data model but not implemented in the agent flow), and that left the classification of threats unclear. In retrospect, I should have invested the 4 hours of work to implement attacker types in the flow.

Not reading the latest guidance thoroughly. Relying on LLM interpretation created unnecessary work on my side to figure out what the latest guidance requires, for example residual risk without financial value, and vulnerability exploitability.

At one point I started fine-tuning the model with surgical changes in the UI and (in 1 case) the psql command line. That resulted in a lot of manual work that could have (and should have) been saved by creating a new card version and regenerating the threat model with the generate model flow.

Primary root cause

Starting the project before reading the device’s intended use.

Intended use defines safety domain, and safety domain defines asset classification. Without a deep understanding of intended use, the threat model drifted.

What surprised me

An attack vector with a custom exploit in a software update package emerged late in the game.

The current device system architecture with its 21 security countermeasures mitigated 97% of the threats in the model.

The model generation pipeline was reliable during the pressure of iterations.

The bottleneck in the process was not the agent model generation speed (30s) but modeling discipline.

Improvements must focus on modeling discipline (intended use, domain locking, attacker typing) rather than increasing agent complexity.

How can we improve OpenCRO for future missions?

Process improvements

Establish the ‘Category’ of the project model as part of the standard terms and conditions of the engagement (FDA Cyber, Business threat modeling, HIPAA, GDPR etc).

Understand intended use of the device before modeling in order to properly establish roles of safety, efficacy, data integrity and data privacy in the model.

Phase 1: manual reading by the analyst to deepen understanding, and discussion with customer RA/QA.

Phase 2: an agent validates the card story for consistency and completeness vis-a-vis the intended use.

Classify attacker types as part of the workshop with the customer.

After the initial generation of the model, validate the Markdown card story with the customer team. In particular, validate the DOMAIN tagging in the cards with the customer team, since this will help later on with sanity checks such as catching privacy threats that are not tagged in the PRIVACY domain.

Mandatory meeting with CEO to review and sign off the final report version.

No manual DB edits after model generation. All changes must originate from a revised card story and model regeneration.
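The DOMAIN sanity check mentioned in the list above could start as something as simple as the sketch below: flag cards whose wording suggests a privacy threat but whose DOMAIN tag is something else. The `Card` class, the keyword list and the sample cards are assumptions for illustration only; a keyword match is a crude stand-in for whatever validation an agent would do, and it only produces candidates for the customer team to review.

```python
from dataclasses import dataclass
from typing import List

# Assumed keyword hints; a real check would be agreed with the customer team.
PRIVACY_HINTS = ("patient record", "personal data", "privacy")

@dataclass
class Card:
    name: str
    domain: str

def untagged_privacy_cards(cards: List[Card]) -> List[Card]:
    """Return cards that mention privacy-related terms but are not tagged PRIVACY."""
    flagged = []
    for card in cards:
        text = card.name.lower()
        looks_private = any(hint in text for hint in PRIVACY_HINTS)
        if looks_private and card.domain.strip().upper() != "PRIVACY":
            flagged.append(card)
    return flagged

# Invented sample cards.
sample = [
    Card("Patient record exported to USB", "SAFETY"),      # suspicious tag
    Card("Personal data sent to vendor cloud", "PRIVACY"),  # consistent tag
    Card("Tampered software update package", "SAFETY"),     # not privacy-related
]

flagged = untagged_privacy_cards(sample)
for card in flagged:
    print("Review DOMAIN tag:", card.name, "->", card.domain)
```

The check deliberately does not change any tags. In keeping with the no-manual-edits rule, a flagged card goes back into a revised card story and the model is regenerated.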

Code/AI agent improvements

Implement the attacker types and attacker_threat relationships in the Markdown card story, the generate agent flow and the upsert procedure (they’re already in the data model).

Test whether the system properly supports multiple vulnerabilities per threat. If it doesn’t, fix the bugs.

Add a dry-run feature to generate a model from a story link (basically the same agent flow as extract, but stopping before calling the upsert procedure).

Improve model generation traceability:

Alter column models.version from text to an integer column that the upsert procedure increments every time the model is regenerated.

Add column models.story_link (text): the story link used to generate the model entities.

The edit model function currently supports duplicating a model into a new one (which is convenient). It would be better to have a ‘Duplicate model assets’ function as well, instead of having to manually re-enter the model assets.

Add a step to the upsert that deletes the existing entities before model generation (I did it manually this time, which is a bit dangerous).

Use the model ‘Category’ to support different regulatory/business categories:

Different report sets for different categories (FDA Cyber Pre-market guidance, HIPAA Security Rule, GDPR, Business threat modeling etc). The tacit assumption is that the analytical model is invariant across all categories but the reports may differ (for example, VaR in Business threat modeling, and exploitability and residual risk in FDA Cyber).

Parametrize the binning of the model’s qualitative valuations (low, medium, high etc) instead of hard coding it in the PostgreSQL logical views.

Implement view groups. This will enable us to have a unique group of views for each category (FDA Cyber, Business threat modeling, HIPAA, GDPR etc).

Use the system to log activities in order to perform time utilization analysis for customer-facing and back-office activities (Data collection, bug fixes and enhancements to Models, Cards, Assets, Views, Agents, UI, backend). Use the RULES in order to create views of time utilization.

Outro

OpenCRO delivered on time. More than speed, it delivered depth of analysis of residual risk and exploitability.

In 2026, OpenCRO provides discipline and depth that generalists can’t deliver.

Reply to this post and I’ll send you a free copy of my book “22 Anti-patterns that kill companies.”

If you, a colleague, or a client are submitting a device to FDA in the next 30-90 days — book a call and we’ll see if you’re a good fit.