Dysfunctional software is risky software

Great software needs great teams, most teams are like dysfunctional families.

Quote courtesy of Jim McCarthy, Dynamics of Software Development

Intro

When you were a kid, did you ever tell your mom,

“Dad already agreed…”

And your sister would go to your dad,

“Mom’s letting Danny stay home from school—why can’t I?”

If you played it right, the two of you got away with murder.

Today, writing software is a family affair with two parents: human and AI.

Software is written in a dialog between them.

The dialog between machines and humans started almost 200 years before ChatGPT.

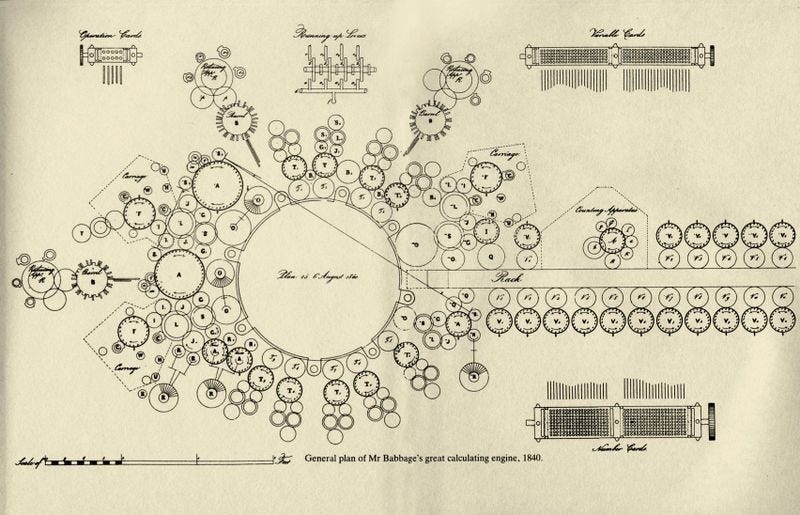

Charles Babbage designed his Analytical Engine in 1837. It was never built, but Ada Lovelace wrote a program for it in 1843. Ada was a colorful character: the daughter of Lord Byron, steered by her mother toward science and mathematics.

Her program is surprisingly close to what we write today.

Loops. Named variables. Structure.

But Ada saw something deeper.

She imagined machines that could do more than compute — write music, manipulate symbols, process abstract ideas.

Great software comes from that fusion of human imagination and machine computation.

Today, great software fuses human intent, taste, and judgment in a dialog with AI execution, expansion, and iteration.

Today, I’ll show you how that fusion works in practice:

One case where the human dominates

One case where the AI dominates

Great software has two parents

In the past year, I’ve spent less time writing code and more time writing specifications for AI.

I can ask Warp Code whether the model it is running is a good fit for the job, and get an intelligent answer.

Here’s what that looks like in practice:

/agent what model are we using

You’re using Claude 3.5 Haiku.

for an application to plan tax-optimized liquidation of stocks and optimized lots should I use a different model?

No, Claude 3.5 Haiku is capable of handling tax-optimized stock liquidation planning. It can work with:

• Tax lot identification strategies (FIFO, LIFO, highest cost, specific ID)

• Capital gains calculations (short-term vs long-term)

• Tax-loss harvesting logic

For a production application, the main considerations are:

1. Accuracy over speed: Ensure thorough validation of tax calculations and compliance rules

2. User responsibility: Include disclaimers that users should consult a tax professional

3. Data security: Handle sensitive financial data securely (encrypted storage, HTTPS, etc.)

4. Testing: Comprehensive test cases for various scenarios and edge cases

Haiku is suitable because the model isn’t the bottleneck for this type of application—careful implementation of tax logic and thorough testing are what matter most. If you need real-time processing of large datasets or complex multi-step reasoning chains, you might consider a more capable model, but for typical tax planning workflows, Haiku works well.

Notice what’s happening here: I’m not writing code. I’m interrogating the model, validating assumptions, and testing system design alternatives.
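For readers who want to see what the agent is describing, here is a minimal Python sketch of tax-lot selection under the identification strategies it lists (FIFO, LIFO, highest cost, specific ID) with a short- vs long-term label per lot. It is an illustration only, not the author’s application and not tax advice: the `Lot` type, the `plan_sale` and `order_lots` functions, the sample lots, and the "held more than 365 days = long-term" cutoff are all hypothetical simplifications.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Lot:
    lot_id: str           # hypothetical identifier for one purchase
    acquired: date        # purchase date
    shares: int
    cost_per_share: float


def order_lots(lots, method, specific_ids=None):
    """Return lots in the order they would be sold under `method`."""
    if method == "FIFO":  # oldest lots first
        return sorted(lots, key=lambda lot: lot.acquired)
    if method == "LIFO":  # newest lots first
        return sorted(lots, key=lambda lot: lot.acquired, reverse=True)
    if method == "HIFO":  # highest cost basis first
        return sorted(lots, key=lambda lot: lot.cost_per_share, reverse=True)
    if method == "SPECIFIC":  # caller names the lots, in order
        by_id = {lot.lot_id: lot for lot in lots}
        return [by_id[i] for i in specific_ids or []]
    raise ValueError(f"unknown method: {method}")


def is_long_term(acquired, sold):
    # Simplification (assumption): "held more than 365 days" counts as long-term.
    # Real holding-period rules and leap-year edge cases vary by jurisdiction.
    return (sold - acquired).days > 365


def plan_sale(lots, shares_to_sell, price, sale_date, method="FIFO", specific_ids=None):
    """Pick lots to sell; return (lot_id, shares, gain, term) for each lot used."""
    remaining = shares_to_sell
    fills = []
    for lot in order_lots(lots, method, specific_ids):
        if remaining == 0:
            break
        qty = min(lot.shares, remaining)
        gain = round(qty * (price - lot.cost_per_share), 2)
        term = "long" if is_long_term(lot.acquired, sale_date) else "short"
        fills.append((lot.lot_id, qty, gain, term))
        remaining -= qty
    if remaining > 0:
        raise ValueError(f"{remaining} shares not covered by the selected lots")
    return fills


lots = [
    Lot("A", date(2020, 1, 15), 100, 50.0),
    Lot("B", date(2023, 6, 1), 100, 120.0),
    Lot("C", date(2024, 3, 10), 100, 90.0),
]
sale_day = date(2024, 9, 1)
print(plan_sale(lots, 150, 100.0, sale_day, "FIFO"))
# [('A', 100, 5000.0, 'long'), ('B', 50, -1000.0, 'long')]
print(plan_sale(lots, 150, 100.0, sale_day, "HIFO"))
# [('B', 100, -2000.0, 'long'), ('C', 50, 500.0, 'short')]
```

In this made-up example, selling the same 150 shares realizes a net $4,000 gain under FIFO but a net $1,500 loss under highest-cost-first, while also realizing a short-term gain on lot C. Weighing tradeoffs like that is exactly the "careful implementation of tax logic" the agent says matters more than the model.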

The first coders were mathematicians.

In the 1970s, the systems analyst emerged: someone who spoke with users and wrote specifications for coders.

As programming languages evolved, coders became ‘programmers’ and expanded their work into design, code, and communication with other humans.

A great programmer is great at design, code and communications.

If that sounds hard, it is.

In 2022, with the launch of ChatGPT, that changed again.

AI reset the division of labor between humans and machines.

But it’s more complex than that.

In the next section, I break down the work between humans and AI.

Humans and AI - the division of labor

There are two kinds of activities in writing software: human-facing and AI-facing.

Human-facing activities

Stakeholder alignment - getting other people to agree with you and support your project

Market discovery - discovering if other people will use your product

Business tradeoffs - buy vs. build, do-nothing vs. do-something

Regulatory strategy (healthcare, MedTech, drug development, energy …) - how do you thread the needle with the regulator? FDA cybersecurity regulation for medical devices requires traceability of cyber testing and an explanation of how each control has been tested and validated to work.

Business model validation - understanding from other people if they want your product, when they’ll want it and how much they’d pay

Capital allocation - getting other people to fund your project

AI-facing activities

Code generation - prompting the LLM to generate code for your task. The emphasis is on describing the outcome you want in your prompt. Specifically:

Write a very specific story of the outcome you want and need. Do not be tempted to talk about the process, the how, or your ideas; let the AI decide how.

Front-load your critical constraints - in time, money and results

🔥 Every ambiguity = risk on fire

Test generation - the latest AI models figure this out on their own and often suggest tests

Build pipelines - with memory, the AI model usually knows how to do this

DevOps - prompt the AI for what you need - this is a lot like code generation activities

Refactoring - an area where AI is far better than humans, although when it gets it wrong, it gets it very wrong.

Static analysis - like refactoring
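The code-generation advice in the list above (a specific outcome story, front-loaded constraints, no silent ambiguity) can be made almost mechanical by treating the prompt as a small structured spec. This is a minimal sketch under stated assumptions: the `Spec` class, its field names, the `REQUIRED_CONSTRAINTS` tuple (taken from "time, money and results" above), and the rendered prompt layout are all hypothetical, not a Warp or LLM-vendor API.

```python
from dataclasses import dataclass, field

# Hypothetical: the constraint categories the article says to front-load.
REQUIRED_CONSTRAINTS = ("time", "money", "results")


@dataclass
class Spec:
    outcome: str  # the story of the result you want, not how to build it
    constraints: dict[str, str] = field(default_factory=dict)
    open_questions: list[str] = field(default_factory=list)  # known ambiguities

    def missing_constraints(self) -> list[str]:
        """Constraint categories this spec has not pinned down yet."""
        return [c for c in REQUIRED_CONSTRAINTS if c not in self.constraints]

    def render(self) -> str:
        """Build the prompt text: constraints first, then the outcome story."""
        lines = ["CONSTRAINTS (non-negotiable):"]
        lines += [f"- {name}: {value}" for name, value in self.constraints.items()]
        lines += ["", "OUTCOME:", self.outcome]
        # Every ambiguity is risk: list it explicitly instead of leaving it to a guess.
        unknowns = self.open_questions + [
            f"Constraint not stated: {c}" for c in self.missing_constraints()
        ]
        if unknowns:
            lines += ["", "ASK BEFORE ASSUMING:"]
            lines += [f"- {q}" for q in unknowns]
        return "\n".join(lines)


spec = Spec(
    outcome=("A user uploads their brokerage tax lots and a cash target. "
             "The app proposes which lots to sell and shows the estimated "
             "short- and long-term gains for each."),
    constraints={"time": "working prototype in two weeks",
                 "results": "every gain figure traceable to a specific lot"},
    open_questions=["Which lot-selection methods must the user be able to choose?"],
)
print(spec.render())
```

The design choice worth copying is the last section of the rendered prompt: a constraint you forgot to state does not disappear, it turns into an explicit question handed to the model rather than an unspoken assumption.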

Is the dialog ever equal?

It depends.

In personal productivity applications, AI dominates. In enterprise systems, humans dominate.

Let’s see why.

Does AI eliminate programmers?

The short answer is no.

In personal productivity applications (e.g., writing social media posts or filing invoices from your email), the human cost of prompting is low and the payoff from letting AI agents do the work is high:

You make your own tradeoffs

You write specs, AI writes code

You can (and usually do) ignore risk

In enterprise systems (e.g., airline reservation systems or healthcare information systems), the human cost is high relative to the cost of AI tokens. You need to execute all six human-facing activities, often over long periods of time, to sustain your project.

You don’t make tradeoffs alone inside the enterprise. You need organizational politics to get buy-in for your idea.

You need to align with other humans, comply, and document

You need funding

You cannot ignore cyber and privacy risk, internal data governance, or industry and regional regulations.

In enterprise systems, humans lead the dialog.

In personal productivity apps, AI leads the dialog.

Just as with your parents when you were a kid, there is a division of dialog.

In my family, my Dad led the dialog on science and my Mom led on homework.

We’ll close the essay with some thoughts on software risk for AI-human software development.

AI prompting for risk analysis in software development

How will AI-human teams create secure software?

I will go into more detail in a future essay, but here’s a teaser.

Secure software will be more about human story-telling and less about machine engineering.

Parallel to the AI-human build dialog, we need to conduct an AI-human attack dialog.

Write a story of how you attack your own system

Front-load your critical constraints

Be a ruthless 5-year-old who breaks stuff

Every ambiguity = risk on fire

And we should not wait for the FDA to remind us about cybersecurity.

Outro

Ada Lovelace died in 1852. Her name lives on in the Ada programming language, designed in the late 1970s for the U.S. DoD for safety-critical, embedded software.

It’s still used today in defense and aerospace where failure is not an option.

Every year on the second Tuesday in October, Ada Lovelace Day celebrates the contributions of women to science, technology, engineering, and mathematics (STEM).

Let’s celebrate Ada Lovelace today for her incredible vision fusing human creativity with machine computation.

We’re still learning how to build that partnership.

In enterprise systems, humans lead the dialog.

In personal productivity apps, AI leads the dialog.

Do humans or AI lead in your own work?